
Watch Google's Artificial Intelligence Synthesizer In Action

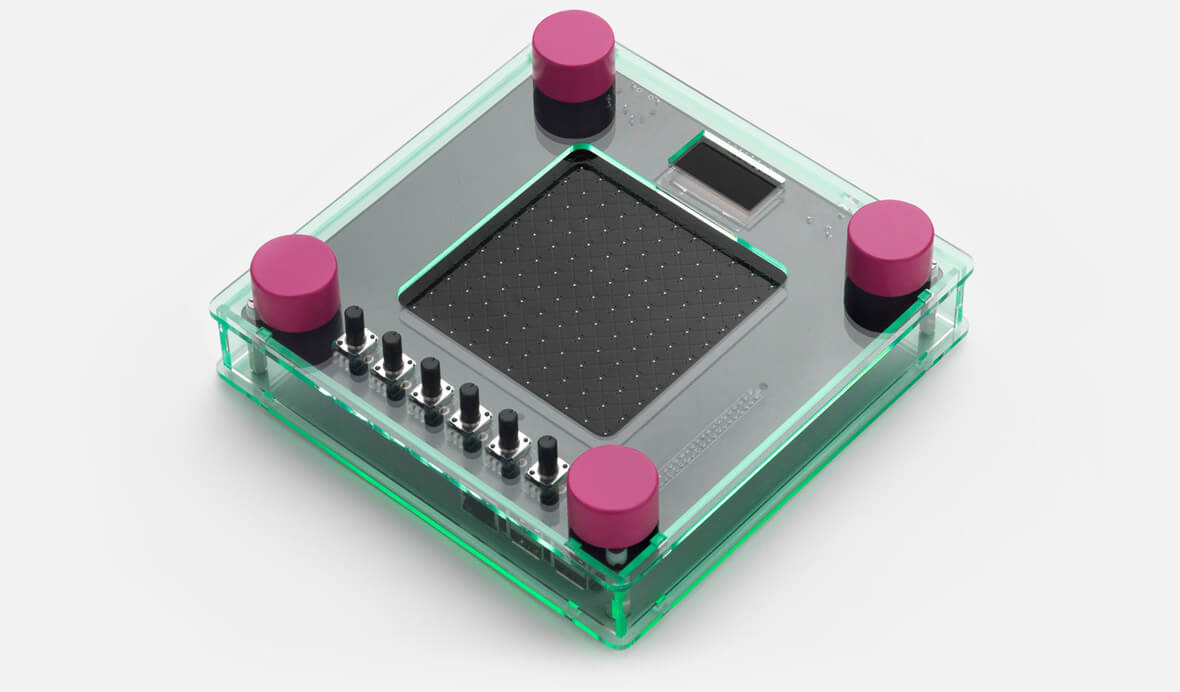

In this video from Google featuring London-based producer Hector Plimmer, we get a demo of NSynth Super, a hardware interface that uses Google’s NSynth musical machine learning algorithm.

The NSynth algorithm stems from Magenta, Google’s AI project that explores the relationship between music, art and machine learning. The NSynth website offers a wealth of fascinating information, and we have summarized some of it below.

How Does NSynth Super Work?

- For this experiment, 16 original source sounds across a range of 15 pitches were recorded in a studio and then fed into the NSynth algorithm to precompute the new sounds.

- The outputs, over 100,000 new sounds, were then loaded into the experience prototype.
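
To make the precompute step a little more concrete, here is a rough Python sketch of what such a lookup table could look like. Only the two counts (16 source sounds, 15 pitches) come from the description above. Everything else is an assumption for illustration: the idea that four sounds are blended across a square control surface, the grid resolution, and the names `blend_weights` and `precompute_table` are hypothetical and are not taken from the NSynth Super code. The sketch stores only blend weights, where a real pipeline would run the model and store audio.

```python
from itertools import product

NUM_SOURCES = 16  # source sounds, from the article
NUM_PITCHES = 15  # pitches per source sound, from the article


def count_source_recordings(num_sources=NUM_SOURCES, num_pitches=NUM_PITCHES):
    """Each source sound is recorded once per pitch."""
    return num_sources * num_pitches


def blend_weights(x, y):
    """Bilinear weights for four corner sounds at position (x, y) in [0, 1].

    Assumption: four sounds sit at the corners of a square surface.
    The four weights always sum to 1.
    """
    return ((1 - x) * (1 - y), x * (1 - y), (1 - x) * y, x * y)


def precompute_table(corner_sounds, pitches, grid_steps):
    """Build a lookup table keyed by (pitch, grid_x, grid_y).

    Each value maps a corner sound name to its blend weight. A real
    pipeline would pass these weights to the model and store the
    generated audio instead (hypothetical layout, not NSynth's).
    """
    table = {}
    for pitch, gx, gy in product(pitches, range(grid_steps), range(grid_steps)):
        x = gx / (grid_steps - 1)
        y = gy / (grid_steps - 1)
        table[(pitch, gx, gy)] = dict(zip(corner_sounds, blend_weights(x, y)))
    return table


if __name__ == "__main__":
    print("source recordings:", count_source_recordings())
    # Hypothetical corner sounds and a hypothetical 5 x 5 grid.
    demo = precompute_table(["flute", "snare", "bass", "sitar"], range(NUM_PITCHES), 5)
    print("table entries for one set of four sounds:", len(demo))
```

The point of precomputing is that the hardware only needs to look up a stored sound for the current pitch and position instead of running a neural network while you play. The number of entries grows quickly with the grid resolution and with how many sets of four sounds are allowed, which is how a small set of recordings can turn into a very large bank of outputs.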

How Does The NSynth Algorithm Work?

- NSynth uses deep neural networks to generate sounds at the level of individual samples. Learning directly from data, NSynth provides artists with intuitive control over timbre and dynamics, and the ability to explore new sounds that would be difficult or impossible to produce with a hand-tuned synthesizer.

If you’re interested in building your own NSynth Super synthesizer, you can access the open-source code on GitHub and learn more about each of the steps involved in using the NSynth algorithm.
