
Sound Synthesis Is Changing With Google's New AI, NSynth

The process of sound synthesis is beginning to take new forms. NSynth, short for Neural Synthesizer, is a new AI instrument being developed by Google’s Magenta project. It is designed to craft entirely new sounds using deep neural networks rather than conventional synthesis techniques.

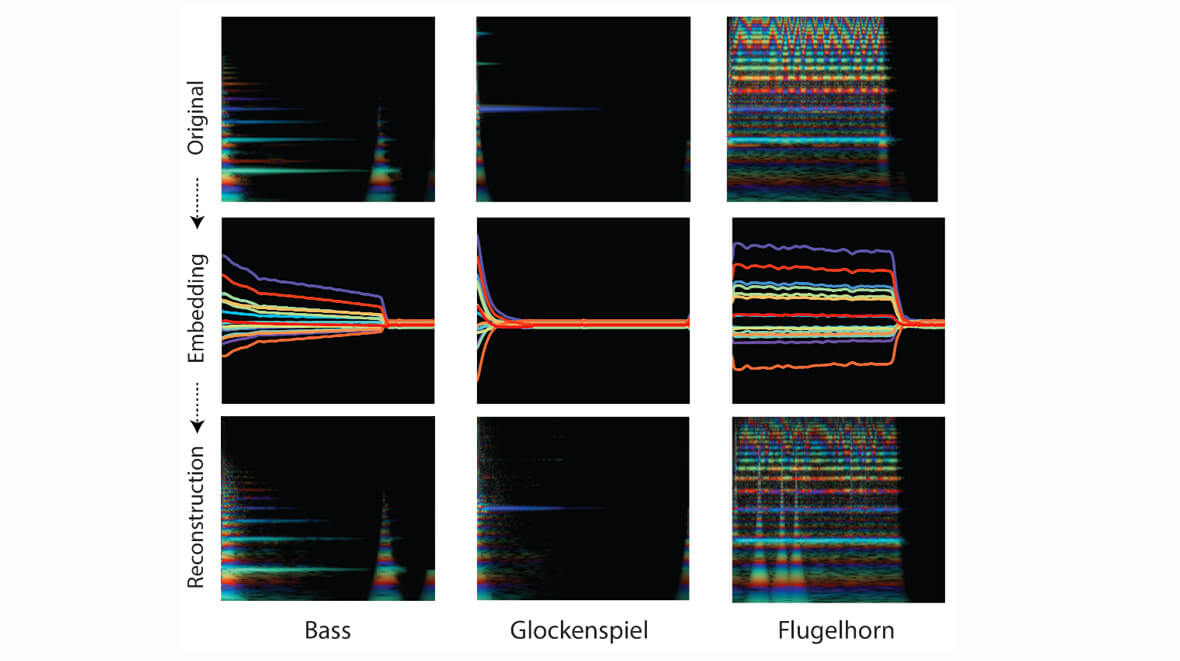

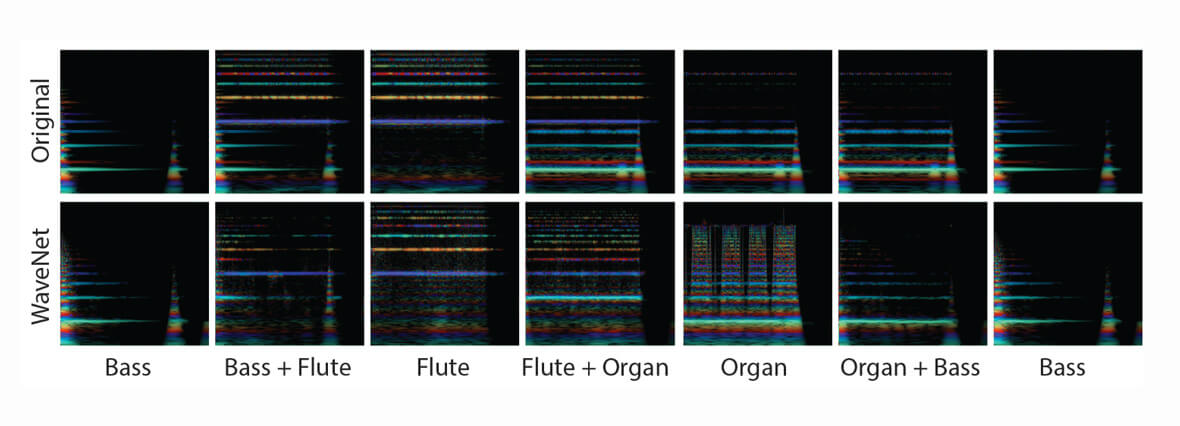

Unlike a traditional synthesizer, which generates audio from hand-designed components like oscillators and wavetables, NSynth uses deep neural networks to generate sounds at the level of individual samples.
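To make that contrast concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not NSynth's code: the 16 kHz sample rate, the function names, and `toy_predictor` are all hypothetical. `toy_predictor` is a fixed two-tap recurrence standing in for a trained neural network, chosen only because it happens to continue a 440 Hz sine.

```python
import math

SAMPLE_RATE = 16000  # samples per second (assumed for illustration)


def sine_oscillator(freq_hz, duration_s, sample_rate=SAMPLE_RATE):
    """Traditional approach: a hand-designed oscillator with a closed-form waveform."""
    n = int(duration_s * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


def sample_level_generator(predict_next, seed, n_samples):
    """Sample-level approach: each new sample is predicted from the samples before it."""
    samples = list(seed)
    while len(samples) < n_samples:
        samples.append(predict_next(samples))
    return samples[:n_samples]


def toy_predictor(history):
    """Hypothetical stand-in for a trained network: a fixed linear recurrence
    that continues a 440 Hz sine from its two most recent samples."""
    w = 2 * math.cos(2 * math.pi * 440 / SAMPLE_RATE)
    return w * history[-1] - history[-2]


# The oscillator computes every sample directly from a formula ...
osc = sine_oscillator(440, 0.01)
# ... while the generator builds the same waveform one sample at a time.
gen = sample_level_generator(toy_predictor, osc[:2], len(osc))
```

In a sample-level neural synthesizer, the predictor would be a learned model rather than a formula, so the waveform is not limited to shapes a designer wrote down in advance.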

NSynth is meant to model how instruments work, from their chromatic range to the resonances they produce in an acoustic space. To do this, it uses a WaveNet-style autoencoder, a neural network trained on an immense library of sampled instruments. An encoder compresses each sound into a compact representation, and a decoder generates audio from that representation.
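The shape of an autoencoder can be sketched in a few lines of Python. This is a toy illustration only: in NSynth both halves are trained neural networks, whereas the `encode` and `decode` functions below are hand-written stand-ins, and the frame size, sample rate, and test tone are assumptions made for the example.

```python
import math

SAMPLE_RATE = 16000  # samples per second (assumed)
FRAME = 32           # samples summarized by one embedding value (assumed)


def encode(audio, frame=FRAME):
    """Stand-in encoder: compress audio to one RMS loudness value per frame."""
    return [
        math.sqrt(sum(s * s for s in audio[i:i + frame]) / frame)
        for i in range(0, len(audio) - frame + 1, frame)
    ]


def decode(embedding, freq_hz, sample_rate=SAMPLE_RATE, frame=FRAME):
    """Stand-in decoder: regenerate audio sample by sample from the embedding."""
    out = []
    for i, level in enumerate(embedding):
        for j in range(frame):
            t = i * frame + j
            # A sine with peak amplitude level * sqrt(2) has RMS equal to level.
            out.append(level * math.sqrt(2) * math.sin(2 * math.pi * freq_hz * t / sample_rate))
    return out


# A 500 Hz tone: 160 samples in, 5 embedding values, 160 samples out.
tone = [0.5 * math.sin(2 * math.pi * 500 * t / SAMPLE_RATE) for t in range(160)]
embedding = encode(tone)
reconstruction = decode(embedding, 500)
```

The embedding is far shorter than the audio it summarizes. That bottleneck is what forces a learned autoencoder to capture the essential character of a sound rather than memorize it sample for sample.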

If you’re interested in learning more about NSynth, you can visit this website to gain a thorough understanding of how it works and what it’s designed to do.